Glass Box vs. Black Box: The AI Governance Question Collections Leaders Can't Ignore

As agentic AI transforms collections, the critical differentiator isn't capability -- it's governance.

Key Takeaways

- Agentic AI represents a generational leap in collections technology -- but demands an equally significant leap in governance

- Black box AI creates regulatory risk, amplifies bias, erodes customer trust, and produces an "initial bump" that plateaus

- Glass box AI governs every decision through a traceable behavioral science framework -- from data ingestion to outcome measurement

- Regulatory frameworks across the US, UK, and Canada increasingly require explainability and fair treatment in AI-driven decisions

- The competitive differentiator is shifting from "do you have AI?" to "how well is your AI governed?"

McKinsey estimates generative AI could create $200-340 billion in annual value across banking. The trajectory is clear -- major financial institutions are deploying AI assistants at scale. These aren't experiments; they're core infrastructure. But as AI capabilities proliferate across the collections industry, a critical question emerges: How do you govern AI in an environment where every decision has regulatory, reputational, and customer relationship implications?

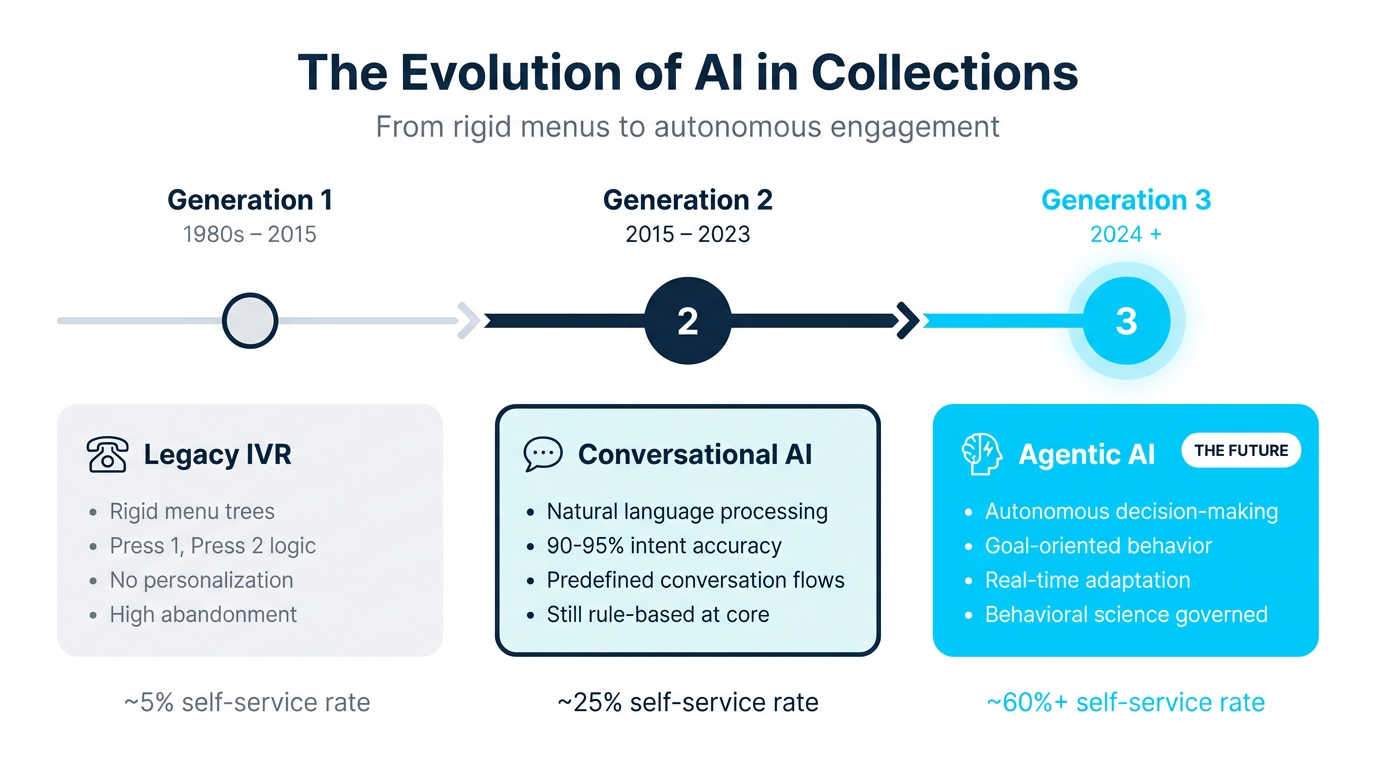

The Evolution: IVR to Conversational AI to Agentic AI

The collections industry's relationship with AI has evolved through three distinct generations, each representing a fundamental shift in capability -- and in governance requirements.

Generation 1: Legacy IVR (1980s-2015)

The first generation of automated customer interaction in collections relied on Interactive Voice Response systems. These were rigid, menu-driven systems that routed calls through predetermined decision trees. "Press 1 for account balance. Press 2 to make a payment." The technology was simple, the governance straightforward -- every possible path was pre-defined by humans. Self-service rates hovered around 5%, and customers who didn't fit neatly into the menu options were routed to live agents or, more often, simply hung up.

Generation 2: Conversational AI (2015-2023)

Natural language processing transformed the interaction model. Instead of navigating rigid menus, customers could speak or type naturally. Intent accuracy reached 90-95%, and self-service rates climbed to approximately 25%. But beneath the conversational interface, the underlying logic remained largely predefined. Flows were scripted by designers. The AI understood what customers said but didn't autonomously decide what to do about it. Governance was manageable because the system's decision space was bounded.

Generation 3: Agentic AI (2024+)

Agentic AI represents a fundamentally different paradigm. These are goal-oriented autonomous agents capable of multi-step planning, real-time decision-making, and adaptive behavior. They don't follow scripts -- they pursue objectives. In collections, this means an AI agent that can assess a customer's situation, determine the optimal intervention strategy, select the right message framing, choose the ideal channel and timing, and adjust its approach based on the customer's response -- all without human intervention. When governed by behavioral science, self-service rates can reach 60% or higher.

Agentic AI brings unprecedented capability -- and unprecedented governance challenges. The question is no longer whether AI can make these decisions. It's whether we can ensure it makes them responsibly.

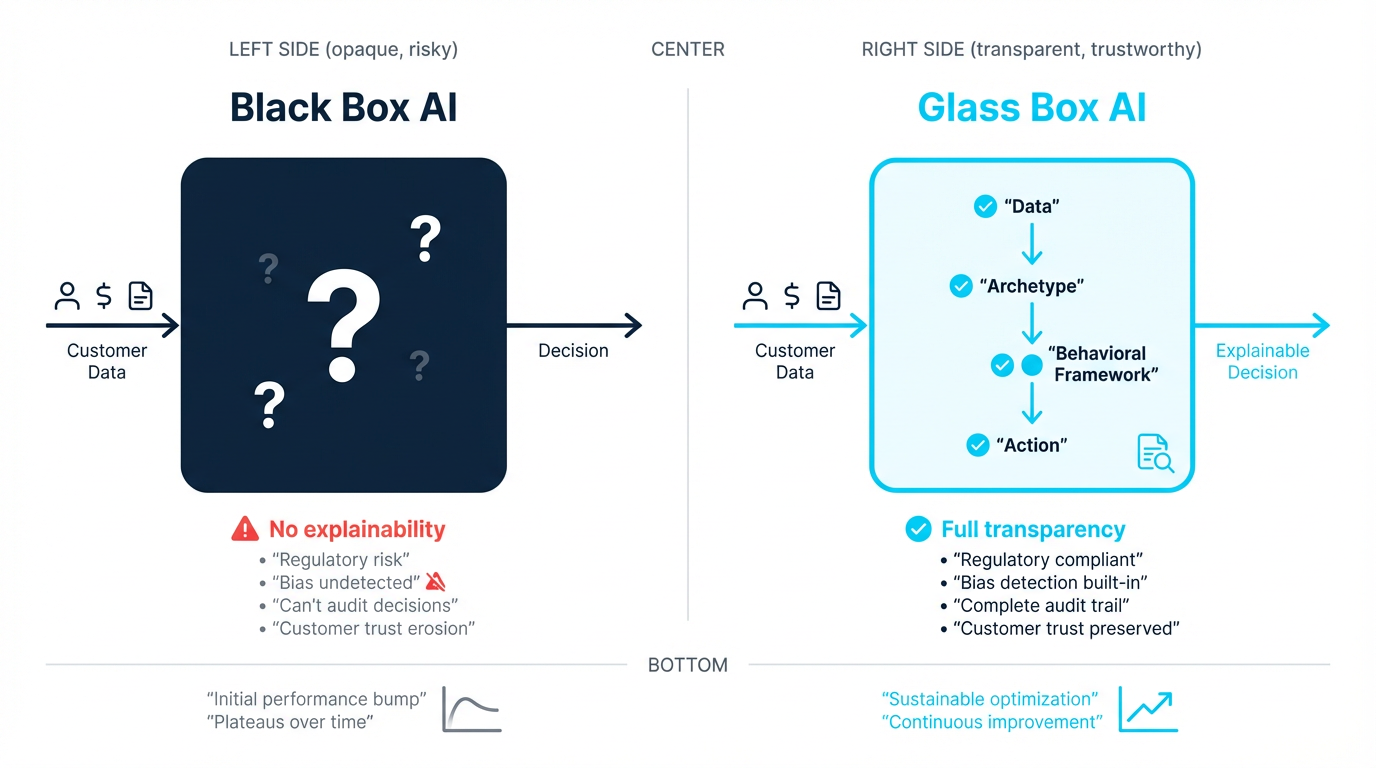

The Black Box Problem

Black box AI in collections processes customer data and produces engagement decisions -- message, channel, timing -- through opaque reasoning that cannot be explained or audited. For a heavily regulated, consumer-facing application, this creates four compounding risks: regulatory non-compliance when authorities demand decision rationale, bias amplification from historical data patterns, customer trust erosion from poorly timed outreach, and performance plateaus from the Initial Bump Trap.

1. Regulatory Risk

Regulators are increasingly explicit about their expectations for AI transparency. In January 2025, Federal Reserve Vice Chair for Supervision Michael Barr delivered a speech specifically addressing AI governance in financial services, emphasizing that institutions must be able to explain how AI-driven decisions are made. The FCA's Consumer Duty in the UK requires firms to demonstrate that their processes deliver good outcomes for customers -- a requirement that's difficult to meet when you can't explain how your AI selects its engagement strategies. In Canada, PIPEDA's accountability principle and Quebec's Law 25 both establish expectations for transparency in automated decision-making that black box systems struggle to satisfy.

2. Bias Amplification

AI systems trained on historical collections data risk encoding and amplifying existing biases. If past collection strategies disproportionately used aggressive tactics with certain demographic groups, a black box AI may replicate those patterns at scale -- without anyone detecting it until regulatory scrutiny or customer complaints surface. Without visibility into the decision-making process, identifying and correcting bias becomes a reactive exercise rather than a proactive one.
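One way to make bias monitoring proactive rather than reactive is to compare how often each group receives a given treatment. The sketch below is a minimal illustration of that idea, not a complete fairness audit: the group labels, the `aggressive` flag, and the function names are all hypothetical, and a real review would use legally appropriate definitions and statistical tests.

```python
from collections import Counter
from typing import Dict, Iterable, Tuple


def treatment_rate_by_group(records: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Share of customers in each group who received the flagged treatment.

    Each record is (group_label, received_flagged_treatment). Both fields
    are hypothetical stand-ins for whatever a real audit would define.
    """
    totals: Counter = Counter()
    flagged: Counter = Counter()
    for group, was_flagged in records:
        totals[group] += 1
        flagged[group] += int(was_flagged)
    return {group: flagged[group] / totals[group] for group in totals}


def max_rate_gap(rates: Dict[str, float]) -> float:
    """Largest difference in treatment rate between any two groups."""
    return max(rates.values()) - min(rates.values())


# Invented sample data, for illustration only.
sample = [("A", True), ("A", False), ("B", False), ("B", False)]
rates = treatment_rate_by_group(sample)
print(rates, max_rate_gap(rates))
```

A large gap does not prove bias on its own, but it is the kind of signal that should trigger human review before a regulator or a complaint does.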

3. Customer Trust Erosion

Customers in financial difficulty are already in a vulnerable state. When they receive communications that feel arbitrary, poorly timed, or tonally inappropriate, trust erodes quickly. A black box system that sends a cheerful payment reminder to a customer who just lost their job, or an aggressive demand to someone actively trying to negotiate, doesn't just fail that individual interaction -- it damages the entire customer relationship. Without understanding why the AI chose that approach, organizations can't prevent these failures from recurring.

4. The Initial Bump Trap

Perhaps the most insidious problem with black box AI in collections is what we call the "initial bump trap." When organizations first deploy AI-driven communications, they typically see an immediate improvement in response rates. The novelty of personalized-seeming messages, optimized timing, and multi-channel engagement drives short-term gains. But without a governed framework for continuous learning and adaptation, these gains plateau -- or worse, decline as customers adapt to the AI's patterns. Organizations mistake the initial bump for sustained improvement and don't realize they've hit a ceiling until the data makes it undeniable.

The Glass Box Alternative

Glass box AI is an approach to AI governance in collections where every decision -- customer classification, message selection, channel and timing -- is explainable, auditable, and traceable to a behavioral science rationale. Unlike black box AI, which produces outputs through opaque optimization, glass box AI lets organizations answer the question: "Why did this customer receive this communication?" with a complete decision chain.

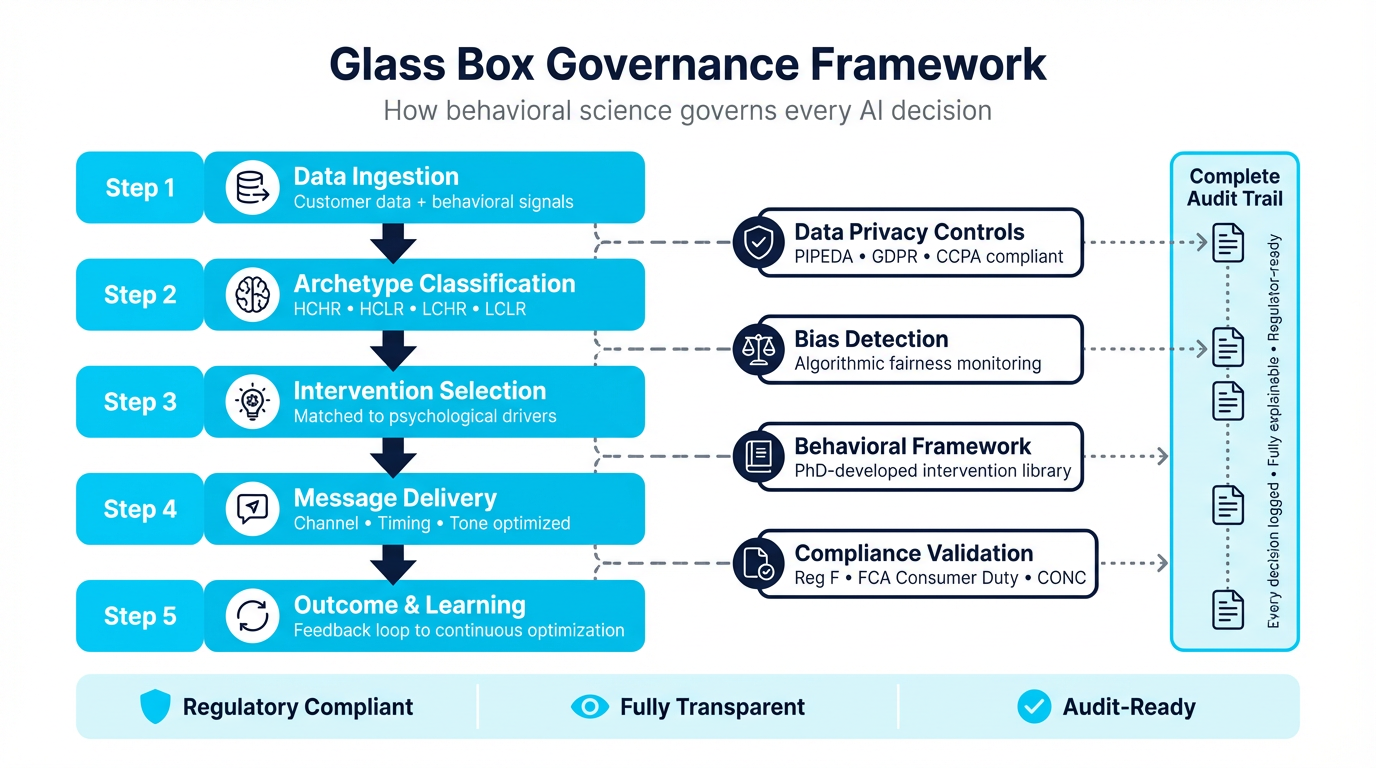

The glass box governance framework operates through five interconnected steps:

Step 1: Data Ingestion

Customer data and behavioral signals flow into the system through strict data privacy controls. This includes traditional account data -- balance, tenure, payment history -- alongside behavioral signals such as channel engagement patterns, time-of-day responsiveness, prior interaction outcomes, and digital behavior indicators. Privacy governance is applied at the point of ingestion, not as an afterthought, ensuring that only permissible data informs downstream decisions.

Step 2: Archetype Classification

Rather than simple risk scoring, the system classifies customers into behavioral archetypes based on two dimensions: capacity to pay and readiness to act. This produces four primary archetypes -- High Capacity/High Readiness (HCHR), High Capacity/Low Readiness (HCLR), Low Capacity/High Readiness (LCHR), and Low Capacity/Low Readiness (LCLR). Each archetype has distinct psychological drivers that inform engagement strategy. Critically, bias detection algorithms continuously monitor classification patterns to ensure that archetype assignment correlates with behavioral signals rather than demographic characteristics.
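The two-dimensional classification can be pictured as a simple two-by-two mapping. The sketch below assumes that upstream models have already reduced the behavioral signals to two scores between 0 and 1; the `BehavioralScores` type, the score names, and the 0.5 threshold are illustrative assumptions, not details from any production system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BehavioralScores:
    """Hypothetical scores in [0, 1], derived upstream from behavioral signals."""
    capacity_to_pay: float
    readiness_to_act: float


def classify_archetype(scores: BehavioralScores, threshold: float = 0.5) -> str:
    """Map the two dimensions onto one of the four archetype codes.

    The 0.5 threshold is an illustrative assumption.
    """
    capacity = "HC" if scores.capacity_to_pay >= threshold else "LC"
    readiness = "HR" if scores.readiness_to_act >= threshold else "LR"
    return capacity + readiness


# A customer who can pay but is not yet motivated to act.
print(classify_archetype(BehavioralScores(capacity_to_pay=0.8, readiness_to_act=0.2)))
```

Because the classification depends only on the two behavioral scores, any demographic attribute is excluded by construction, which is what makes the assignment easy to explain afterwards.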

Step 3: Intervention Selection

Once a customer's archetype is identified, the system matches them to intervention strategies drawn from a behavioral science framework. For an HCLR customer (can pay but isn't motivated), the intervention might leverage loss aversion framing -- emphasizing what the customer stands to lose by not acting. For an LCHR customer (wants to pay but faces constraints), the approach might focus on cognitive load reduction -- simplifying options and removing friction from the payment process. Every intervention is grounded in established behavioral economics principles, not generated ad hoc by the AI.
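In code terms, intervention selection of this kind is closer to a lookup in a curated library than to free-form generation. The following sketch fills in only the two pairings described above and deliberately leaves the other archetypes unmapped; the `Intervention` type and the library structure are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass(frozen=True)
class Intervention:
    name: str
    principle: str
    rationale: str


# Only the two pairings named in the text are included. The remaining
# archetypes are left unmapped rather than guessed.
INTERVENTION_LIBRARY: Dict[str, Intervention] = {
    "HCLR": Intervention(
        name="loss_aversion_framing",
        principle="loss aversion",
        rationale="Emphasize what the customer stands to lose by not acting.",
    ),
    "LCHR": Intervention(
        name="cognitive_load_reduction",
        principle="cognitive load reduction",
        rationale="Simplify options and remove friction from the payment process.",
    ),
}


def select_intervention(archetype: str) -> Optional[Intervention]:
    """Return the curated intervention for an archetype, or None if unmapped."""
    return INTERVENTION_LIBRARY.get(archetype)


choice = select_intervention("HCLR")
print(choice.name if choice else "no curated intervention")
```

Storing the rationale alongside each intervention means the "why" travels with the decision instead of being reconstructed later.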

Step 4: Message Delivery

Channel selection, timing, and tone are optimized based on the customer's demonstrated preferences and the intervention strategy. A compliance validation layer reviews every outgoing communication against regulatory requirements -- CFPB Reg F frequency limits, FCA fair treatment obligations, PIPEDA consent requirements -- before delivery. This is where governance becomes concrete: the AI's autonomy is bounded by both behavioral science principles and regulatory constraints.
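A pre-delivery frequency check can be sketched as a rolling-window count. The example below is a deliberately simplified illustration, assuming a cap of seven contacts in a trailing seven-day window; the actual Reg F rules contain further conditions and exceptions, and the function and constant names are hypothetical.

```python
from datetime import datetime, timedelta
from typing import List

MAX_CONTACTS = 7            # illustrative cap, simplified from the Reg F figure
WINDOW = timedelta(days=7)  # rolling window the cap applies to


def within_frequency_cap(prior_contacts: List[datetime], proposed: datetime) -> bool:
    """True if sending at `proposed` keeps the trailing-window count at or under the cap."""
    window_start = proposed - WINDOW
    recent = [t for t in prior_contacts if window_start < t <= proposed]
    return len(recent) + 1 <= MAX_CONTACTS


proposed = datetime(2025, 1, 8, 10, 15)
# Six earlier contacts, one per day over the previous six days.
history = [proposed - timedelta(days=d) for d in range(1, 7)]
print(within_frequency_cap(history, proposed))
```

Running the check before the message is queued, rather than filtering afterwards, is what makes compliance part of the decision instead of a correction to it.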

Step 5: Outcome and Learning

Every interaction outcome feeds back into the system through a structured feedback loop. Did the customer engage? Pay? Request a callback? Ignore the message? Complain? These outcomes are attributed to specific intervention strategies, enabling continuous optimization. But unlike black box optimization, the learning is transparent -- the system can explain not just that a new approach works better, but why it works better, grounded in behavioral science principles.
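Attributing outcomes to strategies can start with something as plain as an engagement rate per archetype and intervention pair. The sketch below assumes each outcome has already been reduced to a single engaged-or-not flag; the tuple layout and function name are hypothetical, and the sample data is invented.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

# (archetype, intervention, customer_engaged) -- a hypothetical record layout.
Outcome = Tuple[str, str, bool]


def engagement_rates(outcomes: Iterable[Outcome]) -> Dict[Tuple[str, str], float]:
    """Engagement rate for every (archetype, intervention) pair seen in the data."""
    sent: Dict[Tuple[str, str], int] = defaultdict(int)
    engaged: Dict[Tuple[str, str], int] = defaultdict(int)
    for archetype, intervention, did_engage in outcomes:
        key = (archetype, intervention)
        sent[key] += 1
        engaged[key] += int(did_engage)
    return {key: engaged[key] / sent[key] for key in sent}


# Invented sample outcomes, for illustration only.
sample = [
    ("HCLR", "loss_aversion_framing", True),
    ("HCLR", "loss_aversion_framing", False),
    ("HCLR", "social_proof", False),
]
print(engagement_rates(sample))
```

Keeping the intervention name in the key is what lets a later analysis say which behavioral hypothesis an improvement belongs to.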

The contrast between the two approaches is stark:

- Black Box: AI generates a communication. "Why this message?" No clear answer. The system optimized for a metric, and this is what it produced.

- Glass Box: Behavioral scientists develop intervention libraries grounded in established research. AI matches customers to strategies based on behavioral signals. Every decision can be traced: "This customer was classified as HCLR based on these signals, matched to a loss-aversion intervention because of these behavioral indicators, delivered via SMS at 10:15 AM because prior engagement data showed peak responsiveness at this time, with compliance validation confirming adherence to Reg F frequency requirements."

Regulatory Alignment Across Jurisdictions

AI-driven debt collection platforms must comply with distinct regulatory frameworks across three major markets: in the US, CFPB Regulation F governs communication frequency and validation requirements; in the UK, the FCA's Consumer Duty (effective 2023) requires explainable customer treatment decisions; in Canada, PIPEDA's accountability principle and Quebec's Law 25 mandate transparency in automated decision-making. Glass box AI builds compliance validation into every decision, not as a post-hoc filter.

United States. The CFPB's Regulation F establishes specific requirements for debt collection communications, including call frequency limits (presumptively, no more than seven call attempts within seven consecutive days per debt), validation notice requirements, and prohibitions on harassment. State-level privacy laws -- particularly the California Consumer Privacy Act (CCPA) and its amendments -- add data handling requirements. Glass box governance ensures every communication is validated against these rules before delivery and maintains the audit trail regulators expect.

United Kingdom. The FCA's Consumer Duty (effective July 2023) requires firms to demonstrate that their processes deliver good outcomes for retail customers. The April 2024 policy statement PS24/2 strengthened protections for borrowers in financial difficulty, explicitly requiring firms to show how they identify vulnerability and tailor their approach. Glass box AI's archetype classification and intervention matching directly address these requirements -- every customer's treatment can be explained and justified.

Canada. PIPEDA's accountability principle requires organizations to be responsible for personal information under their control, including information processed by automated systems. Quebec's Law 25 (fully effective September 2024) introduced explicit requirements for transparency in automated decision-making. OSFI Guidelines B-10 (third-party risk management) and B-13 (technology and cyber risk management) establish expectations that extend to AI vendors. Glass box governance provides the transparency and accountability these frameworks demand.

Implementation Considerations

Moving from black box to glass box AI governance is not simply a technology upgrade. It requires organizational capabilities that many collections operations lack today.

Behavioral Science Expertise. Glass box AI requires PhD-level behavioral science expertise to develop and maintain the intervention frameworks that govern AI decisions. This isn't about adding a "behavioral nudge" layer to existing technology -- it's about building the foundational methodology that determines how AI engages with customers. Organizations need researchers who understand loss aversion, present bias, cognitive load theory, and social proof not as abstract concepts but as practical tools for designing customer engagement strategies.

Continuous Testing. A glass box framework demands rigorous, ongoing experimentation. Intervention strategies must be tested across different customer segments, market conditions, and regulatory environments. Unlike A/B testing a subject line, this involves testing the underlying behavioral hypotheses -- does loss aversion framing actually outperform social proof for HCLR customers in the UK telecom market? The testing infrastructure must be built into the platform, not bolted on.
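A question like "does loss aversion framing outperform social proof for this segment?" can be checked with a standard two-proportion z-test. The sketch below uses only the Python standard library; the function name is hypothetical and the counts in the example are invented purely for illustration.

```python
import math
from typing import Tuple


def two_proportion_z_test(
    successes_a: int, n_a: int, successes_b: int, n_b: int
) -> Tuple[float, float]:
    """Return (z, two-sided p-value) for H0: both arms share the same rate."""
    p_a = successes_a / n_a
    p_b = successes_b / n_b
    pooled = (successes_a + successes_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        raise ValueError("Both arms have a rate of exactly 0 or 1; the test is undefined.")
    z = (p_a - p_b) / std_err
    # Standard normal CDF expressed through the error function.
    cdf = 0.5 * (1 + math.erf(abs(z) / math.sqrt(2)))
    return z, 2 * (1 - cdf)


# Invented counts: 120 of 1,000 engaged under loss aversion framing,
# 90 of 1,000 under social proof.
z, p_value = two_proportion_z_test(120, 1000, 90, 1000)
print(f"z = {z:.2f}, p = {p_value:.3f}")
```

A single significant result is a starting point, not a conclusion: the same hypothesis still needs to hold across segments, markets, and time before it earns a place in the intervention library.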

Audit Trail Infrastructure. Every decision the AI makes must be logged, explainable, and retrievable. When a regulator asks why a specific customer received a specific message at a specific time, the organization must be able to produce a complete decision chain -- from data inputs through archetype classification, intervention selection, and compliance validation. This requires purpose-built logging infrastructure that most general-purpose AI platforms lack.
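A decision chain of this kind can be captured as one structured record per decision. The sketch below shows one possible shape, serialized as a JSON line; every field name and value is a hypothetical illustration rather than a description of any vendor's schema.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Dict, List


@dataclass
class DecisionRecord:
    """One auditable decision, from inputs through compliance validation."""
    customer_id: str
    input_signals: Dict[str, float]
    archetype: str
    intervention: str
    channel: str
    scheduled_for: str  # ISO 8601 timestamp
    compliance_checks: List[str] = field(default_factory=list)

    def to_log_line(self) -> str:
        """Serialize to a single JSON line suitable for an append-only log."""
        return json.dumps(asdict(self), sort_keys=True)


record = DecisionRecord(
    customer_id="cust-001",  # hypothetical identifier
    input_signals={"capacity_to_pay": 0.8, "readiness_to_act": 0.2},
    archetype="HCLR",
    intervention="loss_aversion_framing",
    channel="sms",
    scheduled_for="2025-01-08T10:15:00",
    compliance_checks=["reg_f_frequency_limit:pass"],
)
print(record.to_log_line())
```

Writing the record at decision time, rather than reconstructing it on request, is the practical difference between maintaining an audit trail and producing one retrospectively.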

Human Oversight. Glass box AI doesn't eliminate human judgment -- it structures it. Behavioral scientists design the intervention libraries. Compliance teams define the regulatory guardrails. Operations leaders set the business objectives. The AI operates within these human-defined boundaries, not outside them. Hybrid approaches that combine AI autonomy with human oversight at critical decision points deliver better outcomes than either fully automated or fully manual processes.

Pre-Approved Content. In a glass box framework, the AI doesn't generate novel content for each customer interaction. Instead, it selects from libraries of pre-approved messages that have been developed by behavioral scientists, reviewed by compliance, and validated through testing. This eliminates the hallucination and brand-risk challenges associated with generative AI in customer-facing applications while still enabling highly personalized engagement through strategic selection and combination of proven content elements.
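Selecting from a pre-approved library, as opposed to generating text, can be expressed as a filter over vetted templates. The sketch below assumes each template carries an explicit compliance approval flag; the `Template` type, its fields, and the sample messages are hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Template:
    template_id: str
    intervention: str
    channel: str
    body: str
    compliance_approved: bool


def eligible_templates(
    library: List[Template], intervention: str, channel: str
) -> List[Template]:
    """Return only approved templates matching the chosen intervention and channel."""
    return [
        t
        for t in library
        if t.compliance_approved
        and t.intervention == intervention
        and t.channel == channel
    ]


# Invented library entries, for illustration only.
library = [
    Template("t1", "loss_aversion_framing", "sms", "Keep your current plan benefits.", True),
    Template("t2", "loss_aversion_framing", "sms", "Draft wording under review.", False),
    Template("t3", "cognitive_load_reduction", "email", "One step to set up a plan.", True),
]
print([t.template_id for t in eligible_templates(library, "loss_aversion_framing", "sms")])
```

Because the unapproved draft can never be returned, the selection step cannot produce wording that compliance has not seen.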

The Competitive Advantage

The competitive advantage of glass box AI governance is threefold: regulatory resilience as CFPB, FCA, and PIPEDA requirements for AI explainability tighten; sustained performance by avoiding the Initial Bump Trap through genuine behavioral learning rather than contact optimization; and preserved customer lifetime value by governing collections interactions to protect the long-term relationship, not just the immediate recovery.

Organizations that invest in glass box governance gain advantages across three dimensions:

- Regulatory resilience. As regulatory requirements for AI transparency tighten -- and they will -- glass box organizations are ahead of the curve rather than scrambling to retrofit explainability.

- Sustained performance. By avoiding the initial bump trap and building genuine behavioral intelligence, glass box approaches deliver compounding returns rather than diminishing ones.

- Customer lifetime value. Collections interactions that respect the customer relationship -- governed by behavioral science rather than pure optimization -- preserve the possibility of a future relationship rather than burning it for short-term recovery.

"Behavioral science is the lens. AI is the horsepower. Governance is what keeps it on the road."

Practical Questions for Evaluation

For collections leaders evaluating AI governance approaches, these six questions can help distinguish glass box from black box:

- Can you explain why a specific customer received a specific message? A glass box system produces a complete decision chain. A black box system points to an algorithm and a metric.

- How does your AI detect and prevent bias in customer treatment? Look for continuous monitoring at the archetype classification level, not just post-hoc analysis of outcomes.

- What happens when a regulator asks for an audit trail of AI-driven decisions? Glass box systems maintain decision logs by design. Black box systems require significant engineering effort to produce them retrospectively.

- How does your system avoid the initial bump trap? Ask about behavioral science methodology, continuous experimentation frameworks, and how the system evolves its approach over time -- not just how it optimizes within existing patterns.

- What role do behavioral scientists play in governing AI decisions? In a glass box framework, behavioral science expertise is structural, not advisory. If the vendor's behavioral science team is separate from the AI engineering team, the governance may be cosmetic.

- How does your system handle regulatory differences across jurisdictions? Glass box governance builds compliance validation into the decision process. Black box systems typically apply compliance as a filter after the AI has already made its decision.

The answers to these questions will reveal whether an organization's AI governance is genuinely transparent or merely marketed as such. In an industry where every AI-driven decision has regulatory, reputational, and customer relationship implications, the distinction matters -- whether you're managing delinquency in auto financing, credit unions, or financial services. For a complete platform evaluation framework covering capabilities beyond governance, see the Enterprise Debt Collection Software Buyer's Guide.

Ready to see glass box AI governance in action?

See how Symend's behavioral science-governed platform delivers transparent, auditable, and continuously improving collections engagement.

Frequently Asked Questions

What is glass box AI?

Glass box AI is an approach to artificial intelligence in collections where every decision the system makes -- from customer classification to message selection to channel timing -- is explainable, auditable, and traceable. Unlike black box AI, which produces outputs through opaque processes, glass box AI governs decisions through a transparent behavioral science framework. This means organizations can explain exactly why a specific customer received a specific communication, satisfying regulatory requirements and enabling continuous, evidence-based improvement.

What is the Initial Bump Trap?

The Initial Bump Trap describes a pattern where organizations deploying black box AI in collections see an immediate improvement in response and recovery rates, but those gains plateau or decline over time. The initial improvement comes from the novelty of AI-optimized messaging and timing, but without a governed behavioral science framework for continuous learning and adaptation, the system cannot evolve as customer behavior changes. Organizations often mistake the initial bump for sustained performance and don't realize they've hit a ceiling until the data makes it undeniable.

How does glass box AI support regulatory compliance?

Glass box AI builds compliance into the decision process rather than applying it as a filter after decisions are made. Every outgoing communication is validated against regulatory requirements -- such as CFPB Reg F frequency limits in the US, FCA Consumer Duty fair treatment obligations in the UK, and PIPEDA accountability and transparency principles in Canada -- before delivery. The system maintains a complete audit trail of every decision, from data inputs through archetype classification, intervention selection, and compliance validation, so organizations can respond to regulatory inquiries with full transparency.

What is the difference between conversational AI and agentic AI?

Conversational AI (Generation 2) uses natural language processing to understand what customers say and respond within predefined flows. It improved self-service rates to approximately 25% but still operated within scripted decision trees. Agentic AI (Generation 3) represents a fundamental shift: these are goal-oriented autonomous agents capable of multi-step planning and real-time decision-making. Rather than following scripts, agentic AI pursues objectives -- assessing a customer's situation, selecting an intervention strategy, choosing the optimal channel and timing, and adapting based on the customer's response, all autonomously. This capability is what makes governance so critical.

Why does glass box AI require behavioral science expertise?

Glass box AI requires PhD-level behavioral science expertise because the intervention frameworks that govern AI decisions must be grounded in established research on loss aversion, present bias, cognitive load theory, social proof, and other behavioral economics principles. These aren't abstract concepts -- they're practical tools that determine how the AI classifies customers into behavioral archetypes, selects intervention strategies, and frames communications. Without genuine behavioral science methodology at the foundation, "glass box" becomes marketing language rather than a functional governance framework. The behavioral scientists develop and maintain the intervention libraries, design the experimentation protocols, and ensure the AI's learning is aligned with established science.